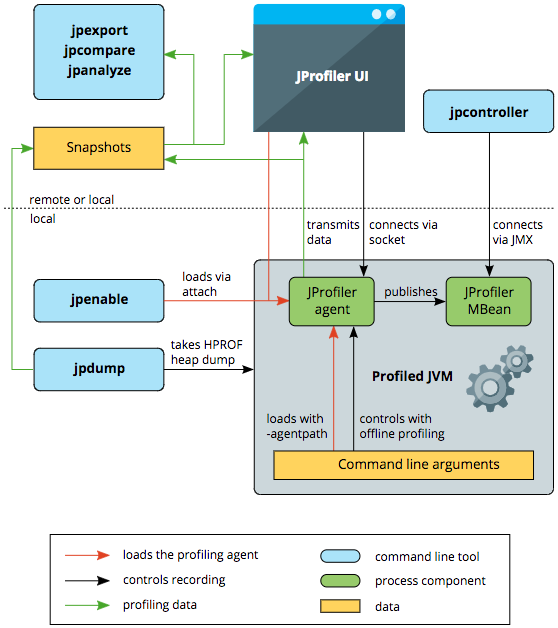

Computing frameworks like Apache Spark have been widely adopted to build large-scale data applications. For Uber, data is at the heart of strategic decision-making and product development. To help us better leverage this data, we manage massive deployments of Spark across our global engineering offices.

While Spark makes data technology more accessible, right-sizing the resources allocated to Spark applications and optimizing the operational efficiency of our data infrastructure requires more fine-grained insights about these systems, namely their resource usage patterns.

To mine these patterns without changing user code, we built and open sourced JVM Profiler, a distributed profiler to collect performance and resource usage metrics and serve them for further analysis. Though built for Spark, its generic implementation makes it applicable to any Java virtual machine (JVM)-based service or application. Read on to learn how Uber uses this tool to profile our Spark applications, as well as how to use it for your own systems.

On a daily basis, Uber supports tens of thousands of applications running across thousands of machines. As our tech stack grew, we quickly realized that our existing performance profiling and optimization solution would not be able to meet our needs. In particular, we wanted the ability to:

Correlate metrics across a large number of processes at the application level

In a distributed environment, multiple Spark applications run on the same server and each Spark application has a large number of processes (e.g., thousands of executors) running across many servers, as illustrated in Figure 1:

Figure 1. In a distributed environment, Spark applications run on multiple servers.

Our existing tools could only monitor server-level metrics and did not gauge metrics for individual applications. We needed a solution that could collect metrics for each process and correlate them across processes for each application. Additionally, we do not know when these processes will launch or how long they will run. To be able to collect metrics in this environment, the profiler needs to be launched automatically with each process.

Make metrics collection non-intrusive for arbitrary user code

In their current implementations, Spark and Apache Hadoop libraries do not export performance metrics; however, there are often situations where we need to collect these metrics without changing user or framework code. For example, if we experience high latency on a Hadoop Distributed File System (HDFS) NameNode, we want to check the latency observed from each Spark application to ensure that these issues haven’t been replicated. Since the NameNode client code is embedded inside our Spark library, it is cumbersome to modify its source code to add this specific metric. To keep up with the perpetual growth of our data infrastructure, we need to be able to take measurements of any application at any time without making code changes. Moreover, implementing a more non-intrusive metrics collection process would enable us to dynamically inject code into Java methods during load time.

To address these two challenges, we built and open sourced our JVM Profiler. There are some existing open source tools, like Etsy’s statsd-jvm-profiler, which can collect metrics at the individual application level, but they do not provide the capability to dynamically inject code into an existing Java binary to collect metrics. Inspired by some of these tools, we built our profiler with even more capabilities, such as arbitrary Java method/argument profiling.

The JVM Profiler is composed of three key features that make it easier to collect performance and resource usage metrics, and then serve these metrics to other systems (e.g., Apache Kafka) for further analysis:

A Java agent: By incorporating a Java agent into our profiler, users can collect various metrics.
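To make the Java agent idea concrete, here is a minimal sketch of the standard `java.lang.instrument` hook that lets code run at class load time without touching application source. It is not the JVM Profiler's actual implementation: the class names `SketchAgent` and `LoadCounter`, the package-prefix argument, and the choice to only count class loads (rather than rewrite bytecode) are all assumptions made for illustration.

```java
import java.lang.instrument.ClassFileTransformer;
import java.lang.instrument.Instrumentation;
import java.security.ProtectionDomain;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicLong;

/** Hypothetical agent sketch; names and behavior are illustrative only. */
public class SketchAgent {
    // Number of times each matching class was passed to the transformer.
    static final Map<String, AtomicLong> LOADS = new ConcurrentHashMap<>();

    /**
     * The JVM calls premain before the application's main method when the
     * process is started with -javaagent and the agent jar's manifest
     * declares "Premain-Class: SketchAgent".
     */
    public static void premain(String agentArgs, Instrumentation inst) {
        // Assumption: the agent argument is a package prefix such as "org.example".
        // Class names reach the transformer in internal form ("org/example/Foo").
        String prefix = (agentArgs == null) ? "" : agentArgs.replace('.', '/');
        inst.addTransformer(new LoadCounter(prefix));
    }

    /** Observes classes as they are loaded; a real profiler would rewrite them here. */
    static final class LoadCounter implements ClassFileTransformer {
        private final String prefix;

        LoadCounter(String prefix) {
            this.prefix = prefix;
        }

        @Override
        public byte[] transform(ClassLoader loader,
                                String className,
                                Class<?> classBeingRedefined,
                                ProtectionDomain protectionDomain,
                                byte[] classfileBuffer) {
            if (className != null && className.startsWith(prefix)) {
                LOADS.computeIfAbsent(className, k -> new AtomicLong()).incrementAndGet();
            }
            // Returning null tells the JVM to keep the original bytecode unchanged.
            return null;
        }
    }
}
```

Assuming the class is packaged into a jar (hypothetically named `sketch-agent.jar`) with the manifest entry above, it would be attached with something like `java -javaagent:sketch-agent.jar=org.apache.hadoop -jar app.jar`. Because the hook is supplied on the command line, it can be launched with every process automatically, which is the property the two challenges above call for. Injecting timing code into specific methods would additionally require modifying `classfileBuffer` with a bytecode manipulation library, which this sketch deliberately leaves out.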
